Agentic AI in banking represents a paradigm shift, from simple task automation to autonomous decision-making systems that fundamentally reshape competitive dynamics in financial services. While global banking technology spending reached $650 billion in 2023—roughly equivalent to Belgium’s GDP—productivity at US banks has been declining by 0.3% each year since 2010, creating an urgent imperative for transformative AI adoption.

McKinsey research shows that agentic AI systems unlock the full potential of vertical use cases by automating complex business workflows. Early banking implementations indicate more than 60% potential productivity gains and savings of over $3 million annually.

In this article, you will learn about specific applications of agentic AI in banking, the challenges and risks, the latest trends shaping the industry, and an adoption roadmap for banks—supported by Neontri’s insights and recent market research.

Key takeaways:

- Agentic AI systems in banking deliver significant productivity gains and substantial annual savings by autonomously managing complex workflows.

- Financial institutions must implement robust three-lines-of-defense frameworks covering model risk, operational security, and regulatory compliance to safely deploy agentic AI in sensitive banking environments.

- Leading banks are achieving 25-40% faster loan approvals and 45-65% reduction in manual trade finance processing.

- Successful implementation requires a phased 18-36 month roadmap progressing from governance foundations to controlled deployment and finally competitive differentiation.

What is agentic AI?

Agentic AI refers to artificial intelligence systems that can plan, execute, and adapt complex tasks with minimal human intervention, a step beyond traditional AI and generative chatbots. Unlike robotic process automation or conventional chatbots, these systems let one or more agents make decisions autonomously within limits that humans set and supervise.

They combine large language models, tool orchestration, and feedback mechanisms to pursue complex objectives across multiple systems while maintaining governance boundaries. Agentic systems are capable of adapting to new situations and processing both structured and unstructured data, making them far more versatile in dynamic environments.

Why does agentic AI matter for banking?

Agentic AI is already delivering measurable results across leading banks worldwide:

- Independent Bank (Michigan) reduced integration pipeline development time by 12× while detecting ATM fraud in real time.

- BNY Mellon uses Eliza, an orchestrator of 13 specialized agents, to give sales teams instant insights and speed up client service.

- A major UK bank achieved a 35% drop in loan fraud by embedding AI agents into loan approval workflows.

- Bank of Singapore cut compliance drafting time by 20–50% with generative AI, without compromising regulatory accuracy.

Together, these cases show how agentic AI is moving beyond pilots into production, driving efficiency, resilience, and scale in banking operations.

Neontri’s banking AI delivery: What we’ve built

While industry examples like BNY Mellon’s Eliza and Bank of Singapore’s compliance automation illustrate where banking AI is heading, the following are systems Neontri has actually delivered:

- IKO Mobile Banking, PKO Bank Polski

Scale: 8 million active IKO applications, over 32 million daily app interactions, and 361 transfers per minute. IKO was also named the world’s best mobile banking app twice in a row by Retail Banker International.

What Neontri delivered: We co-created IKO for Poland’s largest bank, supporting the development and delivery of a high-scale mobile banking platform.

Why it matters for agentic AI: Operating at this scale requires the kind of infrastructure agentic AI depends on, including reliable data access, resilient orchestration, and real-time event handling.

- KIR PSD2 Hub

Scale: Connects 300+ Polish banks with third-party providers under open banking regulation.

What Neontri delivered: We worked with KIR to create the PSD2 hub, a regulated data-exchange infrastructure for Poland’s banking ecosystem.

Why it matters for agentic AI: Banking agents need secure, permissioned access to financial information. PSD2-style infrastructure gives them the compliant foundation required for many AI-driven financial workflows.

- Core banking middleware for Tier 1 Polish bank

Scale: Built for PKO Bank Polski, Data Hub offloads around 70 million records daily, supports around 10 million data retrievals per day, manages 26 TB of offloaded data, and runs with a 99.99% yearly SLA.

What Neontri delivered: Our experts built middleware that optimized API traffic and data retrieval between the core banking system and downstream applications.

Why it matters for agentic AI: Autonomous banking workflows need low-latency, reliable infrastructure, and Data Hub shows how legacy system constraints can be removed before production deployment.

- Visa partnership

Scale: Visa has worked with Neontri for over 10 years.

What Neontri delivered: Our long-term work with global payment clients reflects experience in secure integrations, regulated delivery, and high-reliability financial systems.

Why it matters for agentic AI: Regulated agentic AI requires more than model access. It needs security discipline, integration depth, compliance awareness, and delivery experience in environments where reliability and auditability are non-negotiable.

Benefits of agentic AI for decision makers

For banking leaders, the real significance lies in how these systems translate into measurable business outcomes:

- Competitive advantage

According to McKinsey’s recent Global Digital Banking Conference, “breakaway” banks in AI adoption have emerged. They are scaling their use of AI at double the rate of the average bank, creating significant competitive pressure.

Complementing this, BCG research highlights banking and fintech as the industries with the highest concentration of AI leaders. Nearly half of financial institutions already report regular use of advanced AI systems, and adoption of agentic architectures is accelerating as institutions seek differentiation through automation at scale.

- Regulatory readiness

The Digital Operational Resilience Act (DORA), effective since January 17, 2025, sets strict requirements for EU financial institutions to continuously monitor and control ICT systems, with ultimate accountability placed on management. Institutions must be able to log, classify, and report ICT-related incidents, including those linked to AI technologies.

Globally, similar pressures are emerging. In the U.S., the Federal Reserve and OCC have issued supervisory guidance on AI risk management, emphasizing transparency, explainability, and model governance. In Asia, the Monetary Authority of Singapore (MAS) has introduced the FEAT principles (Fairness, Ethics, Accountability, and Transparency) for AI in financial services.

Properly governed agentic AI systems provide the autonomous monitoring and automated response capabilities needed to meet these requirements, giving an edge to institutions with strong governance practices.

- Scalable efficiency

One global bank applied agentic AI to its KYC processes by deploying ten agent squads, each coordinating four to five AI agents. This shifted the model from periodic reviews to continuous, event-driven customer due diligence. For decision makers, the impact is clear: lower compliance costs, faster onboarding, and the ability to scale regulatory processes without proportional increases in headcount.

- Accelerated growth

In mortgage lending, agentic AI systems can autonomously orchestrate credit underwriting by pulling income, asset, and employment data from multiple sources, cross-validating it against regulatory requirements, and simulating borrower risk under different interest-rate scenarios.

This compresses approval cycles from weeks to days while enforcing consistent credit standards. In rate-sensitive markets where speed drives borrower choice, these capabilities translate directly into market share gains.
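The rate-scenario step can be illustrated with a little arithmetic. The sketch below is a minimal illustration, not a description of any bank's underwriting engine: it uses the standard fixed-rate amortization formula and a debt-to-income (DTI) check. The function names, the 43% DTI ceiling, and the sample borrower figures are all assumptions chosen for the example.

```python
def monthly_payment(principal: float, annual_rate: float, years: int) -> float:
    """Fixed-rate amortization payment: P * r / (1 - (1 + r)^-n)."""
    n = years * 12            # number of monthly payments
    r = annual_rate / 12      # monthly interest rate
    if r == 0:
        return principal / n
    return principal * r / (1 - (1 + r) ** -n)


def stress_test(principal, years, monthly_income, other_debt, rates, max_dti=0.43):
    """Return (rate, payment, dti, passes) for each interest-rate scenario.

    max_dti is an illustrative policy limit, not a regulatory constant.
    """
    results = []
    for rate in rates:
        payment = monthly_payment(principal, rate, years)
        dti = (payment + other_debt) / monthly_income
        results.append((rate, round(payment, 2), round(dti, 3), dti <= max_dti))
    return results


# Hypothetical borrower: 300,000 loan over 30 years, 7,000 monthly income, 500 other debt
for row in stress_test(300_000, 30, 7_000, 500, [0.05, 0.07, 0.09]):
    print(row)
```

In a real workflow the inputs would come from verified income, asset, and employment data, and the pass/fail rule would reflect the bank's own credit policy.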

- Process optimization

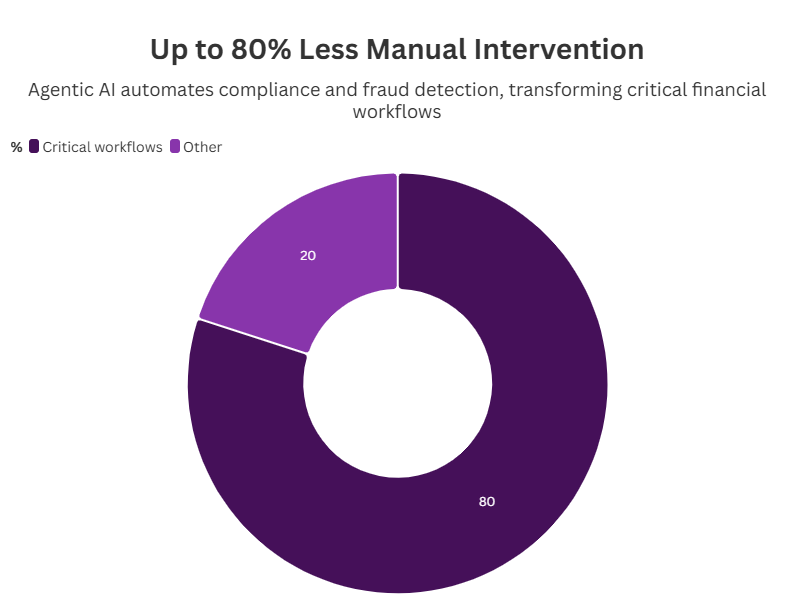

Industry data shows that 36% of financial services professionals have seen AI reduce annual costs by more than 10%. Agentic AI enhances this further by enabling real-time fraud detection and automating compliance checks, eliminating up to 80% of manual interventions in critical workflows. For executives, this means leaner operations with greater accuracy and lower error rates.

- Smarter risk management

Autonomous agentic systems provide continuous monitoring of exposures across credit, market, and liquidity risks. They dynamically adjust thresholds as conditions change—recalibrating models when volatility spikes, new counterparty data arrives, or regulations evolve. For decision makers, this ensures faster, more consistent risk assessment and more resilient decision-making under stress, strengthening both profitability and institutional stability.

Generative AI vs. agentic AI in banking: Decision framework

Generative AI generates outputs (text, code, documents) from prompts and is reviewed before action. Agentic AI executes multi-step tasks autonomously toward a defined goal within governance constraints. In banking, generative AI is production-ready for content tasks; agentic AI is moving from pilot to controlled production for workflow tasks like loan triage, AML investigation, and compliance drafting. The implementation difference: agentic systems require three-lines-of-defense governance, audit trails, and tool-use infrastructure that generative systems don’t.

| Dimension | Generative AI | Agentic AI |
|---|---|---|
| Primary capability | Content and output generation from a prompt | Multi-step task execution toward a defined goal |
| Decision authority | Human reviews every output before action | Autonomous within defined guardrails |
| Banking use case fit | Document drafting, customer communications, code review | Loan triage, AML investigation, treasury optimisation |
| Compliance posture | Output reviewed before any action is taken | Action governed by guardrails and full audit trail |
| Risk profile (regulated banking) | Lower; outputs are always reviewed | Higher; requires three-lines-of-defense framework |
| Implementation maturity in banking | Production-ready (2024 onwards) | Early production (2025–2026) |
| Typical ROI timeline | 6–12 months | 12–24 months |
| Required infrastructure | LLM API and retrieval layer | LLM + tool-use + state management + observability + governance |
| EU AI Act classification | Limited risk for most use cases | Limited to high risk depending on use case |
| Regulatory readiness | Lower documentation burden | Requires transparency, human oversight, and model documentation |

Is agentic AI secure enough for sensitive financial data?

Yes, but only with the right safeguards in place. In banking, deployments must follow the three-lines-of-defense framework, addressing risks at three levels: model performance, operational resilience, and regulatory or reputational exposure.

Model risk management

This area requires more than one-off reviews. AI systems need ongoing validation, explainability, and fairness checks to stay reliable and accountable:

- Validation protocols: Deploying generative and agentic AI requires continuous validation rather than periodic reviews. Institutions need real-time monitoring, statistical control limits, and automated drift detection across decision pathways. In high-stakes areas like credit underwriting or fraud detection, challenger models should benchmark performance and reveal hidden biases.

Equally important are transparency measures and strong safeguards for data privacy and ethics to keep autonomous systems auditable and compliant at scale.

- Explainability requirements: In highly regulated sectors like banking, transparency is critical: “If you have a model that is making decisions, it can’t be a black box.” Implement hierarchical explainability, with high-level business logic accessible to senior management, detailed algorithmic explanations for risk management, and transaction-level audit trails for regulators.

- Bias and fairness monitoring: Deploy continuous bias detection across protected classes with automated alerts when decision patterns deviate from established fairness metrics, supporting enhanced risk assessment while maintaining compliance with fair lending requirements.
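As a rough illustration of the automated fairness alert described in the last point, the sketch below compares approval rates across groups and flags any group that falls too far behind the best-served one. It is a simplified example under stated assumptions: the 0.8 ratio threshold, the group labels, and the function names are illustrative, and a production fair-lending program would use metrics and thresholds agreed with compliance.

```python
from collections import defaultdict


def approval_rates(decisions):
    """decisions: iterable of (group, approved) pairs -> approval rate per group."""
    totals, approved = defaultdict(int), defaultdict(int)
    for group, ok in decisions:
        totals[group] += 1
        approved[group] += int(ok)
    return {g: approved[g] / totals[g] for g in totals}


def fairness_alerts(decisions, min_ratio=0.8):
    """Flag groups whose approval rate is below min_ratio of the highest group rate.

    min_ratio is an assumed alert threshold for this sketch.
    """
    rates = approval_rates(decisions)
    best = max(rates.values())
    if best == 0:
        return []
    return sorted(g for g, r in rates.items() if r / best < min_ratio)


# Synthetic decisions for two hypothetical groups
sample = ([("A", True)] * 80 + [("A", False)] * 20
          + [("B", True)] * 55 + [("B", False)] * 45)
print(approval_rates(sample))
print(fairness_alerts(sample))
```

An alert like this is a trigger for human investigation, not proof of bias: differences in approval rates can have legitimate explanations that only a fuller analysis will surface.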

Operational risk framework

Managing operational risk in agentic AI means rethinking third-party oversight, strengthening cyber resilience, and ensuring business continuity in banking operations:

- Third-party risk management: The complexity of data sources used in AI requires robust governance and documentation to ensure data quality and provenance are appropriately monitored. Establish vendor risk assessments covering model development practices, data security protocols, business continuity planning, and regulatory compliance capabilities.

- Cyber and information security: Financial institutions worldwide are expected to establish structured frameworks for identifying, assessing, and mitigating ICT risks, including continuous monitoring and predefined risk response plans. Implement zero-trust architectures for AI systems with multi-factor authentication, encryption at rest and in transit, and network segmentation.

- Business continuity and operational resilience: Design graceful degradation procedures for when AI agents fail, so that critical banking operations continue through manual override capabilities, with defined recovery time objectives for AI-dependent processes.
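One way to picture graceful degradation is a router that sends work to the agent while it is healthy and diverts it to a manual queue when it is not. The sketch below assumes a simple circuit-breaker pattern; `FallbackRouter`, `max_failures`, and the queue are hypothetical names for illustration, not part of any specific platform.

```python
from dataclasses import dataclass, field
from typing import Any, Callable


@dataclass
class FallbackRouter:
    """Route tasks to an agent; fall back to a manual queue when the agent fails."""
    agent: Callable[[Any], Any]
    max_failures: int = 3          # consecutive failures before the agent is bypassed
    failures: int = 0
    manual_queue: list = field(default_factory=list)

    def handle(self, task):
        if self.failures >= self.max_failures:
            # Circuit is open: skip the agent until an operator calls reset()
            self.manual_queue.append(task)
            return {"task": task, "route": "manual"}
        try:
            result = self.agent(task)
        except Exception:
            self.failures += 1
            self.manual_queue.append(task)
            return {"task": task, "route": "manual"}
        self.failures = 0          # a success clears the failure streak
        return {"task": task, "route": "agent", "result": result}

    def reset(self):
        """Operator action: re-enable the agent after recovery."""
        self.failures = 0
```

The design choice here is that no task is ever dropped: every failure ends in the manual queue, which is where recovery time objectives for the human fallback process would be measured.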

Regulatory and reputational risk

Compliance and trust are equally important: institutions must meet supervisory expectations while protecting customers and their confidence in AI-driven services:

- Supervisory engagement: Regulators expect financial institutions to clearly explain model outputs and effectively track and manage changes in AI performance and behavior. Proactively engage with primary regulators regarding agentic AI deployment plans, documenting governance frameworks and consumer protection measures.

- Consumer protection and fair banking: To uphold consumer protection and fair banking principles, institutions must ensure transparent disclosure of how AI influences decisions in areas such as credit, pricing, or fraud resolution. Clear escalation channels should be in place for AI-related complaints, allowing customers to challenge or appeal outcomes without friction. Equally critical is maintaining human-in-the-loop oversight for all consumer-facing decisions, so that autonomy doesn’t come at the expense of accountability.

Compliance framework: DORA, Basel III, and the Three-Lines-of-Defense for agentic systems

Agentic AI in banking must be governed as part of existing risk, resilience, and model-control frameworks. The key issue is not whether the system uses AI, but whether it can affect customers, transactions, regulated decisions, or critical operations.

DORA

DORA applies from 17 January 2025 and is central for agentic AI used in ICT-supported banking workflows. Banks should assess AI failures under their incident classification process where they affect service availability, data integrity, confidentiality, authenticity, customers, or critical functions. Agentic workflows also need documented fallback procedures, recovery objectives, and third-party exit plans, especially when they depend on a single LLM, cloud, or orchestration provider.

EU AI Act

Creditworthiness assessment and credit scoring are high-risk AI use cases under the EU AI Act. Fraud detection is not automatically high-risk under that category, although it remains subject to financial-services, data-protection, and operational-risk rules. For high-risk systems, banks need lifecycle documentation, human oversight, logging, monitoring, data governance, and clear accountability for decisions that affect customers.

Operational Risk and Basel III

There are no separate “Basel III AI provisions” for agentic AI. The safer framing is operational risk. If an agentic system in credit, treasury, fraud, onboarding, or payments fails and causes financial loss, customer harm, legal exposure, or service disruption, that failure becomes part of the bank’s operational-risk profile. AI failure scenarios should be included in control testing, resilience planning, and risk appetite discussions.

Three Lines of Defense

Line 1 owns the agentic workflow and its controls, including thresholds, approval gates, escalation rules, confidence limits, tool permissions, and action logs. Line 2 independently challenges the design, monitors risk, and has authority to pause or restrict the system. Line 3 audits whether the controls, oversight, logs, incidents, and remediation actions are reliable. A common governance weakness is placing design, risk approval, and assurance under the same technology team.
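The Line 1 controls listed above (tool permissions, confidence limits, approval gates, action logs) can be sketched as a check that runs before an agent acts. This is a minimal sketch under assumed names: `Guardrails`, `evaluate_action`, the field names on the action, and the sample limits are all hypothetical.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Guardrails:
    allowed_tools: frozenset   # tools the agent may call at all
    min_confidence: float      # below this, a human must decide
    approval_limit: float      # amounts above this need human approval


def evaluate_action(action: dict, rules: Guardrails, log: list) -> str:
    """Return 'block', 'escalate', or 'allow', and record the decision in the action log."""
    if action["tool"] not in rules.allowed_tools:
        outcome = "block"
    elif (action["confidence"] < rules.min_confidence
          or action["amount"] > rules.approval_limit):
        outcome = "escalate"
    else:
        outcome = "allow"
    log.append({**action, "outcome": outcome})
    return outcome


rules = Guardrails(frozenset({"lookup_customer", "draft_memo"}), 0.9, 10_000)
action_log = []
print(evaluate_action(
    {"tool": "draft_memo", "confidence": 0.95, "amount": 2_500}, rules, action_log))
```

In this framing, Line 1 owns the values inside `Guardrails`, Line 2 can challenge or tighten them, and Line 3 audits whether the log shows they were actually enforced.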

Model Risk Management

Agentic systems need broader validation than traditional predictive models. Review must cover model outputs, tool use, data access, action logging, escalation behavior, human override, and fallback procedures. Validation should be continuous where the system learns from feedback, changes behavior, or interacts with live operational data.
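Continuous validation often starts with something as simple as a control chart on a monitored metric. The sketch below assumes a Shewhart-style rule: a recent window is flagged as drifted when its mean falls outside the baseline mean plus or minus `k` standard errors. The metric (a daily approval rate), the value of `k`, and the function names are assumptions for illustration.

```python
from math import sqrt
from statistics import mean, stdev


def control_limits(baseline, k=3.0, window=1):
    """Lower and upper limits for the mean of `window` observations."""
    mu, sigma = mean(baseline), stdev(baseline)
    half_width = k * sigma / sqrt(window)
    return mu - half_width, mu + half_width


def drifted(baseline, recent, k=3.0):
    """True when the recent window's mean falls outside the control limits."""
    lower, upper = control_limits(baseline, k, window=len(recent))
    return not (lower <= mean(recent) <= upper)


# Hypothetical daily approval rates from a validated baseline period
baseline = [0.50, 0.52, 0.48, 0.51, 0.49, 0.50, 0.53, 0.47]
print(drifted(baseline, [0.51, 0.49, 0.50]))
print(drifted(baseline, [0.58, 0.60, 0.59]))
```

For an agentic system the same check would be applied to more than model scores, for example tool-call error rates, escalation rates, or override rates, since drift can appear in behavior as well as in outputs.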

Banking-specific applications: Strategic use cases

Agentic AI use cases in banking vary significantly in maturity, complexity, and proven impact. The table reflects public evidence from bank disclosures, consulting research, and vendor case studies.

| Use case | Maturity in 2026 | Typical impact | Complexity | Evidence |
|---|---|---|---|---|
| AML transaction monitoring and investigation support | Production | 15–20% productivity uplift in assisted investigations | Medium | McKinsey |
| Credit review and memo generation | Controlled production | 20–60% productivity improvement in selected workflows | High | McKinsey, nCino |
| Customer service orchestration | Production | 40%+ automated interaction handling in mature deployments | Medium | Bank of America |
| Compliance documentation drafting | Production | Faster drafting and review, depending on workflow scope | Low–medium | PwC |
| Treasury cash forecasting and optimization | Pilot / early production | Better cash visibility and liquidity planning | High | BCG |
| Trade finance document processing | Production | 33–60% reduction in processing or turnaround time | Medium | BNP Paribas, Datamatics |
| KYC onboarding orchestration | Production / early agentic production | 30–50% faster onboarding in benchmark cases | High | McKinsey, Deloitte |
| Fraud investigation copilot | Controlled production | Faster triage and lower manual review effort | Medium | McKinsey |
| Wealth advisory copilot | Pilot / controlled deployment | Early deployments focused on advisor support, not autonomous advice | Very high | Morgan Stanley, Reuters, BCG |

From risk management to customer experience, the following use cases show where agentic AI delivers the most value in banking.

#1: Advanced credit risk management and assessment

Banks are using agentic AI to enhance fraud detection and adapt credit decisions in real time.

Dynamic portfolio optimization. Multi-agent monitors correlate device, behavior, and network patterns for real-time fraud detection and anti-money-laundering alerts, automatically triaging cases and assembling dossiers for analysts. Autonomous systems continuously analyze customer data, transaction history, and market data to adjust credit limits and pricing in real time during market volatility.

Cross-border trade finance. Agentic AI systems automatically verify complex documentation, monitor geopolitical risks affecting trade routes, and adjust financing terms based on evolving sanctions regimes. This reduces trade finance processing time from days to hours and ensures compliance with international banking regulations.

Example: JPMorgan Chase applies agentic AI to strengthen both fraud prevention and portfolio management. Its systems track device activity, customer behavior, and transaction patterns in real time to detect fraud and trigger anti–money laundering alerts, reducing false positives and accelerating investigations. At the same time, AI-driven portfolio tools adjust credit limits and pricing dynamically as market conditions shift. This helps the bank manage risk more effectively and deliver a better customer experience.

#2: Regulatory compliance automation

AI agents streamline compliance by correlating vast data sources and generating more accurate reports.

Anti-money laundering and sanctions. Financial institutions detect only about 2% of global financial crime flows, despite increasing spending by up to 10% a year between 2015 and 2022. Agentic AI systems correlate transaction patterns, customer behavior, and external risk indicators to generate comprehensive suspicious activity reports with complete supporting documentation, reducing false positive rates by 40-60%.

Global payment compliance. Autonomous systems automatically screen payments against sanctions lists, assess regulatory requirements across multiple jurisdictions, and route transactions through optimal correspondent banking relationships. They also generate complete audit trails for compliance teams.
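To make the screening step concrete, the sketch below fuzzy-matches a payment counterparty name against a watchlist using Python's standard-library `difflib`. It is a deliberately simplified illustration: the watchlist entries are fictitious, the 0.85 threshold is an assumption, and real sanctions screening adds transliteration, alias handling, date-of-birth and address matching, and analyst review of every hit.

```python
from difflib import SequenceMatcher


def normalize(name: str) -> str:
    """Lowercase, drop commas and periods, and collapse whitespace."""
    return " ".join(name.lower().replace(",", " ").replace(".", " ").split())


def screen(name, watchlist, threshold=0.85):
    """Return (entry, score) pairs whose similarity to `name` meets the threshold."""
    target = normalize(name)
    hits = []
    for entry in watchlist:
        score = SequenceMatcher(None, target, normalize(entry)).ratio()
        if score >= threshold:
            hits.append((entry, round(score, 2)))
    return sorted(hits, key=lambda h: -h[1])   # strongest match first


# Fictitious watchlist entries for illustration only
watchlist = ["Ivan Petrov Trading LLC", "Acme Shipping Co."]
print(screen("Ivan Petrov Trading L.L.C.", watchlist))
```

The threshold is a trade-off: lowering it catches more name variants but raises the false positive rate that investigators must clear.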

Example: Sardine, an AI-powered fraud and compliance platform, helps banks and fintechs detect fraud, monitor transactions, and manage KYC/AML checks in real time. Its AI agents cut false positives, automate regulatory reports, and streamline investigations under human oversight.

#3: Capital markets and treasury operations

Agentic AI enables real-time risk monitoring and smarter capital allocation in volatile markets.

Real-time market risk assessment. AI agents continuously analyze market data, portfolio positions, and correlation changes to provide dynamic risk metrics and hedge recommendations, enabling more responsive risk management and improved capital efficiency during market volatility.

Regulatory capital optimization. Autonomous systems model regulatory capital requirements across multiple frameworks and recommend portfolio adjustments that optimize capital efficiency while maintaining regulatory buffers, supporting better decision-making.

Example: M&T Bank uses nCino’s continuous credit monitoring, an AI-driven system that delivers explainable, real-time insights on risk and capital allocation. In treasury operations, autonomous AI agents also model regulatory capital needs on an ongoing basis, suggesting portfolio adjustments that keep buffers safe while improving efficiency.

#4: Customer engagement

Enterprise-grade customer engagement through agentic AI systems goes beyond traditional chatbot implementations to create comprehensive relationship management platforms. Recent survey data suggests that more than 20% of cardholders didn’t redeem any rewards in the prior 12 months; AI agents can help such passive consumers by automatically directing spending to the best card in real time.

Proactive relationship management. Agents synthesize unstructured data (calls, chats) with structured ledgers to anticipate customer needs, personalize offers based on spending habits, and raise customer satisfaction while respecting controls. These intelligent systems identify optimal moments for financial guidance and service interventions based on comprehensive customer data analysis.

Omnichannel consistency. Banking agentic AI maintains context and personalization across all customer touchpoints—mobile applications, web portals, branch systems, and contact centers.

Complex query resolution. Advanced artificial intelligence systems handle sophisticated financial questions that require analysis across multiple accounts, products, and time periods. This reduces resolution time for complex inquiries and keeps human oversight for edge cases and exception handling.

Example: JPMorgan Chase has introduced agentic AI assistants and chatbots that can handle complex financial questions, give tailored responses, and even suggest savings strategies or flag fraud risks. These systems manage a high volume of interactions autonomously, cutting customer wait times by over 40% and boosting efficiency, while also supporting human agents with data-driven recommendations.

#5: Competitive intelligence and market positioning

Banks that meet the definition of digital trust returned 7.8 times greater CAGR from 2017 to 2024 than non-trusted banks, with only 18% of customers willing to shift away from a bank they trusted.

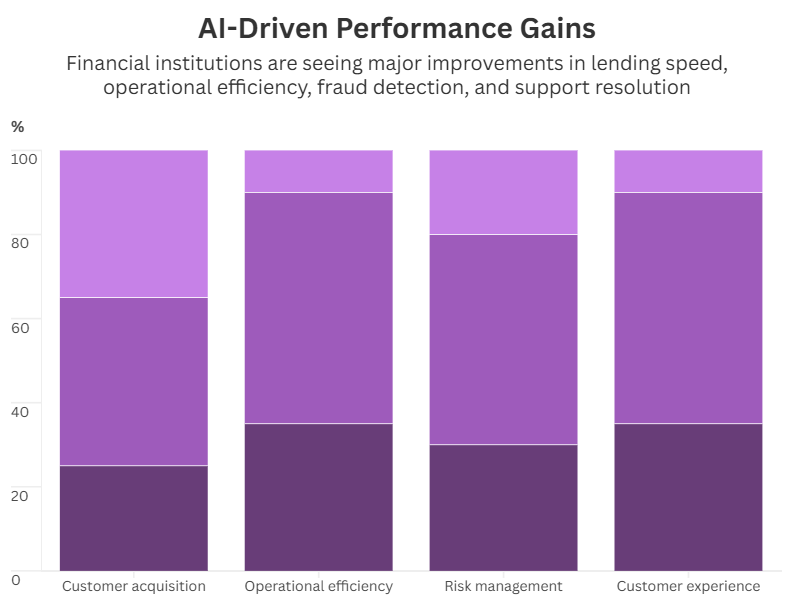

Leading regional banks implementing agentic AI report significant competitive advantages in key performance metrics:

- Customer acquisition: 25-40% improvement in approval-to-funding cycles for commercial lending.

- Operational efficiency: 45-65% reduction in manual processing for trade finance operations.

- Risk management: 30-50% improvement in fraud detection accuracy with fewer false positives.

- Customer experience: 35-55% faster resolution times for complex queries.

Barriers to adoption: Challenges and critical risks

Deploying agentic AI in banking isn’t just about technology. It also requires addressing organizational, cultural, and architectural challenges.

Organizational design challenges in deploying agentic AI

Financial institutions need a structure that oversees agentic AI risk without slowing innovation. Many organizations use a centralized AI oversight committee in the early stages and shift control to subcommittees as applications mature.

Establish senior-level committees including CRO, CTO, CDO, and business line heads with explicit authority over AI deployment decisions and risk appetite setting.

Three lines of defense adaptation:

- Business units own AI system performance, customer outcomes, and initial risk monitoring.

- Risk management validates models, monitors regulatory compliance, and sets governance standards.

- Internal audit independently assesses AI governance effectiveness and compliance.

Risk appetite and policy framework

Defining clear boundaries ensures AI systems operate within acceptable risk levels.

AI-specific risk appetite. Banks might struggle to define how much risk from AI systems is acceptable, especially around accuracy, bias, and reliability.

How to overcome: Establish quantitative risk limits for performance (accuracy thresholds, bias metrics, complaint rates) alongside qualitative standards for transparency and human oversight.
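Quantitative risk limits become enforceable once they are written down in a machine-readable form. The sketch below assumes a small table of limits and a function that compares observed metrics against it; the metric names and limit values are hypothetical placeholders, not recommended settings.

```python
# Hypothetical risk appetite: metric -> (direction, limit)
RISK_APPETITE = {
    "accuracy": ("min", 0.95),             # must not fall below
    "approval_rate_gap": ("max", 0.05),    # bias metric, must not exceed
    "complaints_per_10k": ("max", 5.0),    # must not exceed
}


def breaches(observed: dict, limits: dict = RISK_APPETITE) -> list:
    """Return a human-readable list of limit breaches for the observed metrics."""
    out = []
    for metric, (kind, limit) in limits.items():
        value = observed.get(metric)
        if value is None:
            # Missing evidence is reported rather than silently passed
            out.append(f"{metric}: no data")
        elif (kind == "min" and value < limit) or (kind == "max" and value > limit):
            out.append(f"{metric}: {value} breaches {kind} limit {limit}")
    return out


print(breaches({"accuracy": 0.97, "approval_rate_gap": 0.08}))
```

Qualitative standards for transparency and human oversight do not reduce to a table like this, which is why they sit alongside the quantitative limits instead of being replaced by them.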

Policy integration. A common challenge is that AI governance sits outside existing risk and compliance structures, creating inconsistencies and blind spots.

How to overcome: Integrate AI governance requirements into existing policies covering model risk management, vendor risk management, operational risk, and business continuity planning, so that AI is covered by the same comprehensive risk assessments as other systems.

Cultural transformation

Successful adoption depends on leadership alignment and workforce adaptation.

Leadership alignment. The next stage of genAI implementation will widen the gap between those who see this technology as a cost-saver and those who want to embrace its full potential. A strategic mindset is key to achieving technological goals.

How to overcome: Ensure senior leadership demonstrates commitment to AI-driven transformation through resource allocation and performance incentives.

Employee engagement. Employees may feel uncertain or resistant when their roles shift from performing tasks to supervising autonomous AI systems. This change can create anxiety about job security and slow adoption.

How to overcome: Teams must shift from manual control to supervising autonomous systems, moving from “doers” to “controllers”. This shift calls for training in oversight and exception triage. Implement comprehensive change management programs addressing job role evolution and career advancement opportunities.

Organizational design

AI shifts roles and responsibilities, requiring new job models and skill sets.

Role evolution. Traditional roles often don’t account for supervising or collaborating with AI agents, leaving gaps in oversight.

How to overcome: Redesign job descriptions and performance metrics to reflect new responsibilities in AI-augmented processes, and establish clear accountability for AI system oversight and performance management.

Skills development. Employees may lack the knowledge and confidence to work with AI systems, which can slow adoption and increase risk.

How to overcome: Invest in developing AI literacy across business units, with specialized training for employees directly overseeing or managing AI agents.

Integration architecture

Robust integration and data architecture are essential for scalable, secure AI systems, with safeguards such as encryption, strict access controls, and continuous monitoring to protect sensitive data and maintain regulatory compliance.

API-first integration. Banks still rely on legacy connections like screen-scraping or batch transfers, which limit scalability and create security gaps.

How to overcome: Design integration architecture around robust APIs rather than screen-scraping or batch file transfers, ensuring scalability, security, and maintainability as AI capabilities expand across banking operations and third-party integrations.

Data architecture. Many financial institutions’ data governance controls don’t sufficiently address genAI, which relies heavily on combining public and private data.

How to overcome: Implement real-time data pipelines supporting autonomous decision making while maintaining data lineage, quality controls, and privacy protections.

Build vs. buy vs. partner: Decision framework for banking agentic AI

The build-buy-partner decision shapes speed, control, cost, and regulatory exposure. In banking, the right path depends on how differentiated the workflow is, how much regulated data it touches, and how much control the bank needs over governance, integration, and long-term ownership.

| Path | When it makes sense | Typical timeline | Investment profile | Risk profile |
|---|---|---|---|---|
| Buy – vendor SaaS | Standard workflows where speed matters more than differentiation; limited internal engineering capacity; existing fit with platforms such as nCino, Backbase, or Agentforce Financial Services | 6–12 months for configured rollout; longer if core-system integration is heavy | Recurring licensing plus implementation and integration costs | Lower delivery risk, but higher vendor lock-in, third-party dependency, and customization limits |
| Build – in-house team | Proprietary workflows where the AI capability itself creates competitive advantage; strong internal engineering, data, risk, and compliance capacity | 18–36 months | Highest upfront and ongoing investment; requires permanent product, engineering, MRM, data, and security capacity | Highest delivery risk, but maximum control, ownership, and flexibility |
| Partner – specialist engineering firm | Custom use cases not covered well by SaaS; need senior banking-AI talent without a long hiring cycle; clear scope and success criteria; client wants ownership of the output | 9–18 months | Project-based investment depending on scope, integrations, and governance requirements | Balanced model: faster than full in-house build, more control than SaaS, but requires strong client governance and knowledge transfer |

Implementation roadmap: 18–36 months from foundation to production agentic AI

Agentic AI adoption in banking should move in stages. Each phase builds the data, governance, risk controls, and operating evidence needed to move from a controlled first use case to production-scale workflows.

Months 0–6: Foundation

- Data layer audit. Identify the data sources each use case needs and assess latency, quality, lineage, and access controls. If core systems can’t support fast read access, resolve the middleware or data platform architecture first.

- Governance framework. Establish ownership across risk, technology, data, and business teams. Define risk appetite through accuracy thresholds, escalation rules, transaction limits, and decisions excluded from autonomous scope.

- Model risk management policy. Update MRM for agentic AI, including continuous validation, tool-use scope, data integrations, and human override testing.

- Three-lines-of-defense org design. Define Line 1, Line 2, and Line 3 responsibilities before the first agent is built.
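One way to make the risk appetite concrete is to express it as configuration that every proposed agent action is checked against. The following is a minimal sketch under stated assumptions: the threshold values, decision names, and the `route_action` function are placeholders invented for illustration and would be set by the bank's own risk function.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class RiskAppetite:
    """Illustrative limits; every value here is a placeholder assumption."""
    min_confidence: float = 0.90
    max_transaction_eur: float = 10_000.0
    excluded_decisions: frozenset = frozenset({"credit_denial", "account_closure"})


def route_action(decision_type: str, amount_eur: float, confidence: float,
                 appetite: RiskAppetite = RiskAppetite()) -> str:
    """Return 'blocked', 'escalate', or 'autonomous' for a proposed action."""
    if decision_type in appetite.excluded_decisions:
        return "blocked"    # excluded from autonomous scope entirely
    if amount_eur > appetite.max_transaction_eur:
        return "escalate"   # over the transaction limit
    if confidence < appetite.min_confidence:
        return "escalate"   # below the confidence threshold
    return "autonomous"
```

Keeping the limits in a frozen configuration object, separate from agent logic, means risk teams can review and change them without touching the agent itself.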

Months 6–12: First agent

- Single use case. Deploy one narrow use case, typically AML investigation drafting or compliance document generation.

- Heavy human-in-the-loop review. Keep human review in place for all material outputs before they reach customers, regulators, or external systems.

- Full audit trail. Log every action, tool call, escalation, decision, and human override.

- Evidence base. Use the pilot to prove performance, reliability, and control effectiveness.
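The full audit trail described above can be sketched as an append-only log in which each record carries a hash of the previous one, so later tampering is detectable. Hash chaining is an illustrative design choice here, not something the roadmap prescribes, and the `AuditTrail` class, its field names, and the in-memory storage are all assumptions for this example.

```python
import hashlib
import json
from datetime import datetime, timezone


class AuditTrail:
    """In-memory, hash-chained log. A real system would use durable storage."""

    def __init__(self) -> None:
        self.records: list[dict] = []

    def log(self, agent_id: str, event_type: str, detail: dict) -> dict:
        prev_hash = self.records[-1]["hash"] if self.records else "0" * 64
        body = {
            "timestamp": datetime.now(timezone.utc).isoformat(),
            "agent_id": agent_id,
            "event_type": event_type,  # e.g. action, tool_call, escalation, override
            "detail": detail,
            "prev_hash": prev_hash,
        }
        payload = json.dumps(body, sort_keys=True).encode("utf-8")
        record = {**body, "hash": hashlib.sha256(payload).hexdigest()}
        self.records.append(record)
        return record

    def verify(self) -> bool:
        """Recompute every hash and check each record links to its predecessor."""
        prev_hash = "0" * 64
        for record in self.records:
            body = {k: v for k, v in record.items() if k != "hash"}
            payload = json.dumps(body, sort_keys=True).encode("utf-8")
            if record["prev_hash"] != prev_hash:
                return False
            if hashlib.sha256(payload).hexdigest() != record["hash"]:
                return False
            prev_hash = record["hash"]
        return True
```

Calling `verify()` after the pilot gives reviewers a quick integrity check before the log is used as evidence.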

Months 12–18: Production deployment

- First agent in production. Move the first agent into production once live performance data supports it.

- Reduced human-in-the-loop review. Lower review levels only when observed accuracy justifies it and risk teams approve.

- Second agent in pilot. Start a second controlled use case using the same governance and audit infrastructure.

- Performance dashboards. Track accuracy, escalation rates, override patterns, drift signals, and operational impact.
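The dashboard rates listed above, and the rule that review levels drop only with both evidence and risk approval, can be sketched as follows. The `ReviewedCase` fields, the function names, and the 0.98 accuracy bar are hypothetical values chosen for illustration.

```python
from dataclasses import dataclass


@dataclass
class ReviewedCase:
    agent_correct: bool  # reviewer agreed with the agent's output
    escalated: bool      # agent handed the case to a human
    overridden: bool     # human changed the agent's output


def dashboard_metrics(cases: list[ReviewedCase]) -> dict:
    """Compute simple rates over a window of reviewed cases."""
    n = len(cases)
    if n == 0:
        raise ValueError("no cases in window")
    return {
        "accuracy": sum(c.agent_correct for c in cases) / n,
        "escalation_rate": sum(c.escalated for c in cases) / n,
        "override_rate": sum(c.overridden for c in cases) / n,
    }


def may_reduce_review(metrics: dict, risk_approved: bool,
                      min_accuracy: float = 0.98) -> bool:
    """Review can drop only if accuracy clears the bar AND risk signs off."""
    return risk_approved and metrics["accuracy"] >= min_accuracy
```

Drift signals and operational impact would need their own measures; this sketch covers only the three rates that fall directly out of reviewed cases.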

Months 18–24: Multi-agent orchestration

- Two or more agents collaborating. A typical configuration is an AML monitoring agent that identifies and prioritises cases, paired with an investigation-drafting agent that prepares initial SAR documentation for analyst review.

- Orchestration layer. Use LangGraph, a custom orchestration layer built on existing middleware, or a vendor platform. The orchestration layer falls within model risk management scope, and SR 11-7 and EBA guidelines apply to the system as a whole, not just individual agents.

- Control requirements. Evaluate orchestration based on auditability, failure handling, access control, and the ability to pause individual agents.

- Separate audit trails. Keep clear logs for each agent and for the orchestration layer itself.
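To show what the control requirements mean in practice, here is a minimal sketch of a custom orchestration layer in plain Python. It does not use LangGraph or any vendor API; the `Orchestrator` class, the two stub agents, and the 50,000 prioritisation threshold are assumptions for illustration only.

```python
from typing import Callable


class Orchestrator:
    """Runs agents in sequence, with per-agent pause and separate logs."""

    def __init__(self) -> None:
        self.agents: dict[str, Callable[[dict], dict]] = {}
        self.paused: set[str] = set()
        self.agent_logs: dict[str, list[dict]] = {}  # one trail per agent
        self.orchestration_log: list[dict] = []      # trail for the layer itself

    def register(self, name: str, fn: Callable[[dict], dict]) -> None:
        self.agents[name] = fn
        self.agent_logs[name] = []

    def pause(self, name: str) -> None:
        self.paused.add(name)
        self.orchestration_log.append({"event": "paused", "agent": name})

    def run(self, pipeline: list[str], case: dict) -> dict:
        for name in pipeline:
            if name in self.paused:
                # Failure handling: stop and hand the case to a human.
                self.orchestration_log.append({"event": "halted", "agent": name})
                return {**case, "status": "needs_human"}
            case = self.agents[name](case)
            self.agent_logs[name].append(dict(case))
            self.orchestration_log.append({"event": "step_done", "agent": name})
        return {**case, "status": "ready_for_analyst_review"}


def monitoring_agent(case: dict) -> dict:
    # Placeholder prioritisation rule, not a real AML model.
    return {**case, "priority": "high" if case["amount"] > 50_000 else "low"}


def drafting_agent(case: dict) -> dict:
    # Placeholder draft text; a real agent would prepare SAR documentation.
    return {**case, "draft": f"Draft SAR notes for case {case['case_id']}"}
```

Pausing the drafting agent leaves the monitoring agent running and routes affected cases to a human, which is the behaviour the control requirements ask for.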

Months 24–36: Competitive differentiation

- Material business workflows. Scale agentic systems into workflows that affect real operational performance.

- Continuous model risk monitoring. Make monitoring, validation, drift detection, and performance reviews part of normal operations.

- Regulatory reporting cadence. Establish regular reporting and evidence packs for risk, audit, and supervisory review.

- Operational advantage that competitors cannot shortcut. Governance maturity, labelled data, validated models, and two years of audit evidence take time to build and cannot be purchased off the shelf.

Choosing the right vendor for AI deployment

Selecting the right partners is as critical as the internal roadmap. Vendor evaluation should balance technical, commercial, and strategic considerations.

- Technology assessment: Evaluate vendors on model performance, integration capabilities, security architecture, and regulatory compliance support. Use portfolio reviews, case studies, product demonstrations, technical documentation, and introductory workshops to assess transparency and depth of expertise.

- Commercial structure: Negotiate contracts with clear performance guarantees, liability provisions, and intellectual property protections.

- Strategic partnership potential: Consider long-term value beyond initial implementation, such as joint innovation, market intelligence sharing, and competitive differentiation. This can include joint pilots, innovation labs, and regular strategy workshops to align roadmaps and explore co-development.

Neontri’s recommendation: When evaluating vendors, look beyond the immediate project scope and assess cultural alignment, communication style, and responsiveness. A strong partner will demonstrate not only technical competence but also the flexibility to adapt to evolving regulatory, operational, and strategic priorities. Building trust and ensuring clear escalation paths are equally important to maintain resilience throughout long-term collaboration.

ROI-driven financial modeling for agentic AI in banking

For executive approval of large-scale AI initiatives, banks need a structured approach to rigorously quantify costs, benefits, and risks.

Investment analysis and returns

Before committing resources, banks should carefully weigh the full cost of AI implementation against the potential efficiency gains and revenue growth:

- Total cost of ownership: Include technology licensing, infrastructure costs, organizational change management, ongoing monitoring, and vendor management expenses in ROI calculations.

- Benefit quantification: Leading institutions report 35-55% cost reduction in middle-office operations through intelligent automation, with payback periods of 18-24 months for well-architected implementations. Model benefits across operational cost reduction, revenue enhancement through improved customer acquisition, and risk reduction through lower operational losses.

- Risk-adjusted returns: Apply appropriate discount rates reflecting implementation risk and competitive response risk to provide conservative ROI estimates supporting investment decisions.
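The payback and risk-adjusted return calculations above can be sketched in a few lines. The function names are hypothetical, cash flows are assumed to arrive at year end, and any figures passed in are the bank's own estimates rather than benchmarks.

```python
def payback_months(upfront_cost: float, monthly_net_benefit: float) -> float:
    """Months until cumulative net benefit covers the upfront cost (undiscounted)."""
    if monthly_net_benefit <= 0:
        raise ValueError("monthly net benefit must be positive")
    return upfront_cost / monthly_net_benefit


def npv(upfront_cost: float, annual_net_benefits: list[float],
        discount_rate: float) -> float:
    """Net present value, assuming benefits arrive at the end of each year."""
    discounted = sum(
        benefit / (1 + discount_rate) ** year
        for year, benefit in enumerate(annual_net_benefits, start=1)
    )
    return discounted - upfront_cost
```

For example, an invented 4,000,000 upfront cost with a 200,000 monthly net benefit gives `payback_months(4_000_000, 200_000) == 20.0`. Raising `discount_rate` to reflect implementation risk lowers the NPV, which is what makes the estimate conservative.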

Success metrics framework

To measure real value, organizations must track operational, financial, and risk-related metrics consistently over time:

- Operational metrics: Processing time reduction, human error rate improvement, straight-through processing percentage, and customer satisfaction scores.

- Financial metrics: Cost per transaction, revenue per customer, return on AI investment, and efficiency ratio improvement.

- Risk metrics: Model performance stability, bias detection alerts, regulatory examination findings, and operational risk incidents.
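A few of these metrics reduce to simple ratios, sketched below. The function and parameter names are assumptions for illustration, and the efficiency ratio uses the common definition of non-interest expense divided by revenue.

```python
def stp_rate(total_transactions: int, manually_touched: int) -> float:
    """Share of transactions completed with no manual intervention."""
    if total_transactions <= 0:
        raise ValueError("total_transactions must be positive")
    return (total_transactions - manually_touched) / total_transactions


def cost_per_transaction(total_operating_cost: float,
                         total_transactions: int) -> float:
    """Average operating cost of processing one transaction."""
    if total_transactions <= 0:
        raise ValueError("total_transactions must be positive")
    return total_operating_cost / total_transactions


def efficiency_ratio(non_interest_expense: float, revenue: float) -> float:
    """Expense needed to generate one unit of revenue; lower is better."""
    if revenue <= 0:
        raise ValueError("revenue must be positive")
    return non_interest_expense / revenue
```

Computing the same ratios before and after deployment, over comparable periods, is what turns them into evidence of value.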

Agentic AI trends in the banking industry

The banking sector is changing rapidly as agentic AI matures and regulators adapt, reshaping how institutions operate and stay compliant. The key trends driving this transformation are:

| Trend | Description |
|---|---|
| From copilots to co-workers | Agents advance from drafting to doing: invoking systems, reconciling breaks, and closing cases with audit trails. Multi-agent collaboration lets specialized agents coordinate through orchestration layers. |
| Enterprise-grade multimodality | Voice, images, and PDFs flow into unified AI systems for richer insights and fewer handoffs, supporting comprehensive document and data processing. |
| Reg-tech convergence | Continuous controls enable automated compliance, traceability, and embedded policy checks in every workflow. |
| Proactive compliance | With DORA in application since 17 January 2025, the European Supervisory Authorities are focusing on policy implementation and oversight frameworks for critical third-party providers. Banks that anticipate regulatory developments in AI governance keep a compliance advantage over reactive competitors. |
| International considerations | AI governance frameworks need to accommodate multiple regulatory jurisdictions as business operations expand globally. |

Strategic recommendations for banking leadership

To capture the full value of agentic AI, banking leaders should focus on governance, risk, differentiation, internal capabilities, and regulatory alignment.

- Establish AI governance as a board-level priority

Companies need comprehensive risk scorecards and must focus on four key sets of controls to balance innovation against risk mitigation. Integrate agentic AI strategy into board-level risk and technology discussions, with regular reporting on progress and risk metrics.

- Invest in comprehensive risk management

Analytical AI and gen AI boost compliance efficiency, but they rarely deliver bottom-line benefits at scale unless the underlying processes are fundamentally transformed. Implement robust risk management frameworks before deploying production systems.

- Focus on competitive differentiation

McKinsey predicted that the value at stake from AI could reach $15 trillion over the next decade, with agentic AI expected to provide nearly a third of that value. Select use cases that create sustainable competitive advantages rather than pursuing generic efficiency improvements.

- Build organizational capabilities

Invest in developing internal AI expertise and governance capabilities rather than relying solely on vendor relationships, supporting long-term competitive positioning.

- Maintain regulatory leadership

Engage proactively with regulators and industry groups—such as the European Banking Authority (EBA), European Central Bank (ECB), U.S. Federal Reserve, Office of the Comptroller of the Currency (OCC), and global forums like the Basel Committee or the Financial Stability Board (FSB). Active participation helps banks influence standards, anticipate regulatory shifts, and demonstrate thought leadership in financial services agentic AI.

Unlock AI’s full potential in banking with Neontri

Planning agentic AI deployment in a regulated bank?

With over 400 successful projects delivered, Neontri has built AI and data infrastructure for European Tier 1 retail banks for over a decade, including:

- IKO (ranked the world’s number one mobile banking app with 8 million users).

- The KIR PSD2 hub connecting 300 Polish banks.

- A 10-year partnership with Visa spanning multiple banking integration programmes.

We don’t sell agentic AI as a product. We deliver custom implementations with DORA-ready governance, three-lines-of-defense model risk frameworks, and integration into the legacy core systems most agentic AI vendors quietly avoid.

Book a 60-minute scoping call with a banking AI engineer. We’ll review your current data and AI maturity, identify the one or two use cases with the strongest ROI and lowest regulatory risk for your institution, and provide a written implementation roadmap.

Conclusion

Agentic AI in banking represents a fundamental transformation, moving institutions from reactive service delivery to proactive relationship management and risk mitigation. Success requires sophisticated governance, comprehensive risk management, and a strategic implementation that balances innovation with regulatory compliance and operational resilience.

FAQ

What is the strategic impact of agentic AI on banks?

Agentic AI enables autonomous decision-making and continuous learning, transforming banks from reactive to proactive organizations. It drives operational efficiency, enhances personalization, and supports scalable growth by automating complex tasks across front and back offices.

Why is proactive risk management with agentic AI crucial for financial stability?

Agentic AI continuously monitors transactions and market data to detect risks like fraud or credit issues early, allowing banks to act before small problems escalate. This real-time risk assessment strengthens financial stability and regulatory compliance.

How quickly can a bank see ROI from agentic AI investments?

Early benefits appear in operational efficiency improvements within 18-24 months, while longer-term value emerges through enhanced customer relationships and competitive positioning. Conservative financial models should assume 24-36 month payback periods accounting for implementation complexity.

Can agentic AI integrate with our legacy core banking systems?

Yes, agentic AI solutions can be layered on top of existing systems to enhance workflows without full system replacement. They act as intelligent intermediaries, coordinating data and actions across legacy platforms.

How can agentic AI support our digital transformation strategy?

Agentic AI accelerates digital transformation by automating routine tasks, enabling hyperpersonalized customer experiences, and fostering agile decision-making. It helps banks move from manual processes to intelligent, adaptive operations aligned with strategic goals.

What is the difference between generative AI and agentic AI in banking?

Generative AI creates outputs, such as summaries, reports, responses, or code, from a prompt. Agentic AI goes further: it plans and executes multi-step workflows by using tools, APIs, and data sources within defined guardrails. In banking, the distinction matters because the EU AI Act sets different documentation, oversight, and audit requirements based on an AI system’s role and autonomy. Start with generative AI to build credibility and governance, then move to agentic AI architecture for high-volume, auditable workflows where analyst time is the main constraint.

What is the realistic ROI of agentic AI for a Tier 2 retail bank?

The most realistic first-year ROI comes from controlled back-office use cases such as AML case preparation, KYC support, compliance drafting, and document review. A conservative planning range is 20–35% reduction in analyst time, with payback often taking 18–30 months. Lending use cases may shorten decision cycles, but they usually need heavier model-risk, fairness, and governance controls.

How does DORA apply to agentic AI deployments?

DORA applies when agentic AI depends on, affects, or disrupts ICT systems, data integrity, service availability, or critical outsourced technology. It has applied since 17 January 2025 and covers ICT risk management, incident classification and reporting, operational resilience testing, and ICT third-party risk. AI failures should be assessed under DORA when they create an ICT-related incident, not simply because an AI output is wrong.

What is the three-lines-of-defense framework for agentic AI?

Line 1 covers the controls built into the agent, such as transaction limits, escalation rules, confidence thresholds, and full action logs. Line 2 is the independent risk function that checks model performance, reviews escalated cases for patterns, and can suspend the agent if needed. Line 3 is internal audit, which confirms that Line 1 controls work as intended and that Line 2 remains independent. The main governance failure is putting all three lines under one technology team. Regulators look for this in banking AI reviews and often treat it as a serious weakness.

Should banks build, buy, or partner for agentic AI?

Banks should buy vendor SaaS for standard needs, fast rollout, and limited tailoring. They should build in-house when agentic AI creates a real competitive advantage and the bank has a strong engineering team. Partnering with a specialist firm works best when the scope is custom but well-defined, banking-AI expertise is needed, and delivery accountability matters. The main hidden costs are vendor change requests, governance, integration, and compliance.

How long does it take to deploy agentic AI in a regulated bank?

A realistic path to first controlled production is about 12 months. The first 3–6 months should cover data readiness, governance, model-risk policy, controls, and fallback processes. Multi-use-case scale usually takes 24–36 months because each workflow needs testing, audit trails, ownership, and regulatory review.

What use cases are actually in production at major banks in 2026, versus pilot or PR?

At scale: AI assistants, internal copilots, document processing, fraud analytics, customer-service support, and compliance/documentation workflows. Bank of America reports more than 3.2 billion Erica interactions, while JPMorgan has publicly discussed hundreds of AI opportunities and productivity gains from internal AI tools. More autonomous financial-crime agents are moving into early deployments in 2026 rather than being proven across the whole sector.

How does the EU AI Act classify agentic AI in banking?

The EU AI Act doesn’t classify a system as high-risk simply because it is agentic. Creditworthiness assessment and credit scoring for natural persons are high-risk use cases, while financial fraud detection is specifically excluded from that credit-scoring category. Most Annex III high-risk rules start applying on 2 August 2026, subject to the Digital Omnibus proposal.

What data infrastructure is required before agentic AI is viable in banking?

Agentic AI needs fast access to customer, transaction, and product data, usually through a modern data platform or middleware that protects the core banking system. It also needs real-time event pipelines and clear data lineage with quality controls across every source the agent uses. Without this, decisions can’t be audited and model risk management can’t work. Banks should build this foundation before the first agentic AI deployment, not alongside it.

How do you handle model risk management for autonomous agents?

Agentic model risk management must cover the full workflow, not just the LLM. Banks need continuous monitoring, governed tool and data integrations, prompt and output controls, and tested human override under real operating conditions. SR 26-2 in the US and EBA guidance in the EU both reinforce the need for risk-based governance, validation, and evidence that controls work in practice.