When an e-commerce business experiences rapid growth, whether triggered by a viral marketing campaign, a seasonal peak event like Black Friday, or a strategic international expansion, the pressure on the underlying platform rises instantly. In these high-stakes moments, every millisecond matters, and the underlying architecture becomes the silent backbone that either sustains momentum or magnifies vulnerability. Its ability to scale and perform flawlessly becomes the dividing line between capturing market share and suffering catastrophic reputational damage.

This article explores the architectural paradigms, optimization techniques, and strategic decisions required to master e-commerce scalability. It evaluates the shift from monolithic legacy systems to composable, cloud-native architectures and analyzes the quantifiable impact of performance on the bottom line.

Key takeaways:

- Scalability measures a system’s capacity to handle growth efficiently, while performance measures how fast the system responds at any given moment – both are critical but require different architectural solutions.

- Scalability and performance directly impact revenue, with research showing that a 0.1-second improvement in load times can increase conversions by 8.4%.

- Modern e-commerce platforms benefit from architectural patterns like headless commerce, serverless computing, and horizontal scaling to handle traffic spikes and support omnichannel experiences without degrading user experience.

To build a successful e-commerce platform, stakeholders must start by clearly separating the closely related – yet fundamentally different – concepts of scalability and performance. Although these terms are frequently blended together in high-level discussions, they point to distinct engineering challenges that demand tailored architectural solutions. Understanding this difference early on ensures that teams invest in the right capabilities, build more resilient systems, and avoid costly redesigns as user demand grows.

E-commerce scalability: Capacity to expand

Scalability is the measure of a system’s capacity to accommodate growth. It is not merely about handling more traffic, more orders, and more products, but about doing so efficiently. It reflects how gracefully a platform can absorb business expansion without degrading user experience or inflating operational costs.

A truly scalable e-commerce platform follows a linear or near-linear cost curve: if the resource input doubles, the system’s capacity to handle load should also double. When a platform requires disproportionately more infrastructure, engineering effort, or operational overhead to maintain performance at higher volumes, the architecture reveals a fundamental lack of scalability.
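
One way to make the cost-curve idea concrete is to compare capacity growth with resource growth between two load tests. The sketch below is illustrative only: the function name and the load-test numbers are hypothetical, and a value close to 1.0 is taken to mean near-linear scaling.

```python
def scaling_efficiency(base_resources: float, base_capacity: float,
                       new_resources: float, new_capacity: float) -> float:
    """Return capacity growth divided by resource growth (1.0 = linear scaling)."""
    resource_growth = new_resources / base_resources
    capacity_growth = new_capacity / base_capacity
    return capacity_growth / resource_growth


# Hypothetical load-test results: 4 servers sustain 2,000 requests/s,
# 8 servers sustain 3,400 requests/s.
efficiency = scaling_efficiency(4, 2000, 8, 3400)
print(f"Scaling efficiency: {efficiency:.2f}")
```

A result well below 1.0 suggests that each added unit of infrastructure buys less capacity than the previous one, which is the pattern described above.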

The economic implications of scalability failures are staggering. According to a Black Friday/Cyber Monday report from Noibu, small degradations and friction in “high-intent moments” such as site search, product discovery, and checkout flow during peak load periods contributed to as much as $305 million in lost revenue opportunities.

At the same time, retail companies lose roughly $2.6 billion per year globally because of slow page load times, often exacerbated by traffic surges and insufficient capacity planning. These figures underscore a critical reality: scalability is not merely an IT concern; it is a board-level financial risk.

This is why addressing the unique friction points in international e-commerce checkout flows is critical to preventing significant revenue leakage beyond just performance issues.

Vertical vs. horizontal scaling

As businesses evaluate how to expand their platform capacity, one of the first architectural decisions they face is choosing the right scaling strategy. At the core of this decision is a fundamental dichotomy: whether to increase the power of individual machines (vertical scaling) or to distribute the workload across many (horizontal scaling).

- Vertical scaling

The scale-up approach involves adding more power to a single server – upgrading the CPU, adding more RAM, or increasing storage capacity. Historically, this was the path of least resistance as it required minimal changes to the application code.

However, vertical scaling has a finite ceiling determined by physics and economics. For example, upgrading from a 32-core to a 64-core server may deliver only marginal performance gains while doubling the price. At extreme levels, specialized “monster” machines not only become prohibitively expensive but also introduce single points of failure, where one hardware fault can bring the entire platform offline.

To counteract these inherent limitations and ensure long-term agility, a strategic shift towards meeting composable commerce requirements allows platforms to evolve without constant, costly overhauls.

- Horizontal scaling

The scale-out approach involves adding more machines to the pool of resources. Instead of one supercomputer, the workload is distributed across multiple smaller commodity servers. This approach offers virtually unlimited growth potential and superior fault tolerance. If one server fails, traffic is simply routed to the others. In modern e-commerce architectures, scaling microservices is a common approach to handling increased traffic and maintaining system performance.
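
The routing behavior behind horizontal scaling can be sketched as a round-robin balancer that skips unhealthy servers. This is a simplified illustration, not a production load balancer: the class name and server names are hypothetical, and health is set manually rather than detected by health checks.

```python
from itertools import cycle


class RoundRobinBalancer:
    """Distribute requests across servers in turn, skipping servers marked down."""

    def __init__(self, servers):
        self.servers = list(servers)
        self.healthy = set(self.servers)
        self._ring = cycle(self.servers)

    def mark_down(self, server):
        self.healthy.discard(server)

    def mark_up(self, server):
        if server in self.servers:
            self.healthy.add(server)

    def next_server(self):
        if not self.healthy:
            raise RuntimeError("no healthy servers available")
        # At most one full pass over the ring is needed to find a healthy server.
        for _ in range(len(self.servers)):
            candidate = next(self._ring)
            if candidate in self.healthy:
                return candidate
        raise RuntimeError("no healthy servers available")


balancer = RoundRobinBalancer(["app-1", "app-2", "app-3"])
balancer.mark_down("app-2")  # simulate a failed server
picks = [balancer.next_server() for _ in range(4)]
print(picks)
```

With `app-2` marked down, requests alternate between the two remaining servers, which is the failover behavior described above.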

Functional vs. transactional scalability

Beyond hardware, scalability applies to the software architecture itself. A robust e-commerce platform must grow along two distinct dimensions:

- Transactional scalability – the ability to process more units of work per second, such as orders, checkout sessions, and search queries. This is often the focus during events like Black Friday.

- Functional scalability – the ability to enhance the system with new requirements without destabilizing the core platform. This includes adding new payment gateways, integrating complex supply chain management systems, or deploying new marketing features as new commercial or operational needs emerge.

E-commerce businesses that successfully balance both functional and transactional scalability capabilities see better performance during peak demand periods compared to their peers. This balance ensures that the business can innovate without crashing the site during a high-velocity sales event.

E-commerce performance: User-centric metric

Performance is the snapshot of efficiency at a specific moment. It quantifies how fast a page loads or how quickly a database query returns for a single user. It encompasses the measurable aspects of user experience across the entire system architecture.

Core Web Vitals

Google’s Core Web Vitals have standardized performance measurement, linking it directly to search engine optimization (SEO) and user experience (UX). The critical metrics include Largest Contentful Paint (LCP), Interaction to Next Paint (INP), and Cumulative Layout Shift (CLS). The correlation between these metrics and revenue is well documented.

Research by Portent found that e-commerce sites loading in 1 second have conversion rates 3x higher than those loading in 5 seconds. Furthermore, a seminal study by Deloitte and Google, “Milliseconds Make Millions,” analyzed mobile site data across 37 brands and found that a mere 0.1-second improvement in load times resulted in an 8.4% increase in conversions for retail sites and a 9.2% increase in average order value (AOV).

Backend performance components

While frontend metrics track what the user sees, backend performance dictates the speed at which data is delivered.

- Server response time: Amazon found that every 100ms of latency cost them 1% in sales.

- Database response time: In e-commerce systems, the database is frequently the primary performance bottleneck. Well-optimized queries, especially those powering product catalog lookups, should complete in under 50 ms to maintain a responsive user experience.

- API latency: Modern e-commerce relies on APIs for pricing, inventory, and recommendations. To keep page rendering fluid and avoid cascading delays, RESTful API responses should ideally remain below 200 ms.

- Search query speed: Search represents one of the highest-intent actions in the user journey. According to Algolia, search latency exceeding 500 ms can reduce conversion rates by 20%, highlighting how even small delays directly affect revenue.

Key scalability challenges in modern e-commerce

Scaling an e-commerce platform involves solving complex engineering problems that arise when thousands of users attempt to access and modify the same data simultaneously. Addressing these issues requires a mix of careful architecture, robust observability, and automated runbooks so that the platform degrades predictably and recovers quickly under stress. Many of the e-commerce challenges discussed here are rooted in broader principles of software scalability.

Sudden traffic spikes

Events like Black Friday or influencer-driven flash sales can generate traffic surges of 300% to 1000% within minutes. Traditional static infrastructure cannot provision resources fast enough to absorb this shockwave, leading to 503 errors and downtime. This “thundering herd” problem overwhelms databases as thousands of processes compete for limited connections.

Solution architecture:

- Predictive auto-scaling: utilizing machine learning (ML) algorithms to analyze historical traffic patterns and pre-provision server instances before the spike hits.

- Cache warming: pre-loading caches with static assets and frequently accessed database queries 24-48 hours before a known event.

- Circuit breakers: configuring the system to degrade gracefully. If the recommendation engine fails under load, the product page should still render without recommendations rather than crashing entirely.
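
The circuit-breaker idea from the list above can be sketched in a few lines. This is a minimal, single-threaded illustration: the class, its thresholds, and the `fetch_recommendations` function are hypothetical, and a production system would more likely rely on an established resilience library.

```python
import time


class CircuitBreaker:
    """Stop calling a failing dependency for a while and serve a fallback instead."""

    def __init__(self, failure_threshold=3, reset_timeout=30.0, clock=time.monotonic):
        self.failure_threshold = failure_threshold
        self.reset_timeout = reset_timeout
        self.clock = clock
        self.failures = 0
        self.opened_at = None  # None means the circuit is closed

    def call(self, func, fallback):
        if self.opened_at is not None:
            if self.clock() - self.opened_at < self.reset_timeout:
                return fallback()  # open: skip the dependency entirely
            self.opened_at = None  # timeout elapsed: allow one trial call
        try:
            result = func()
        except Exception:
            self.failures += 1
            if self.failures >= self.failure_threshold:
                self.opened_at = self.clock()
            return fallback()
        self.failures = 0  # a success closes the circuit again
        return result


def fetch_recommendations():
    # Simulated failure of the recommendation engine under load.
    raise TimeoutError("recommendation engine overloaded")


breaker = CircuitBreaker(failure_threshold=2, reset_timeout=30.0)
page = {"product": "sku-123"}
for _ in range(3):
    page["recommendations"] = breaker.call(fetch_recommendations, fallback=lambda: [])
print(page)
```

The product page still renders, just with an empty recommendations list, and after the second failure the breaker stops sending requests to the struggling service until the timeout expires.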

Database bottlenecks

The database is the “source of truth” for the e-commerce store. During high traffic, concurrent read and write requests, particularly for inventory counts, cause lock contention. New Relic data suggests that database bottlenecks are responsible for 67% of e-commerce performance issues during peak periods.

Solution framework:

- Read replicas: directing all browsing traffic (catalog views) to read-only database copies, leaving the primary database free to handle write-heavy transactions like checkout.

- Database sharding: splitting the database horizontally. For example, customers A-M might be on one database server, and N-Z on another, cutting the load on each server roughly in half if customers are evenly distributed.

- Distributed SQL: utilizing modern distributed SQL databases like CockroachDB, which can scale writes horizontally across multiple regions while maintaining strong consistency, crucial for real-time inventory management.
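
The sharding example above (A-M on one server, N-Z on another) can be expressed as a small routing function. The sketch below also shows a hash-based variant, which is an alternative not described in the text that tends to spread keys more evenly. All shard names and function names are hypothetical.

```python
import hashlib

RANGE_SHARDS = {"a-m": "customers-a-m", "n-z": "customers-n-z"}  # hypothetical names
HASH_SHARDS = ["customers-0", "customers-1"]                     # hypothetical names


def range_shard(customer_name: str) -> str:
    """Route by first letter: A-M to one shard, everything else to the other."""
    first = customer_name.strip()[:1].upper()
    return RANGE_SHARDS["a-m"] if "A" <= first <= "M" else RANGE_SHARDS["n-z"]


def hash_shard(customer_id: str, shards=HASH_SHARDS) -> str:
    """Route by a stable hash of the customer ID, independent of name distribution."""
    digest = hashlib.sha256(customer_id.encode("utf-8")).digest()
    return shards[int.from_bytes(digest[:8], "big") % len(shards)]


print(range_shard("Alice"), range_shard("nina"))
print(hash_shard("cust-42"))
```

Range-based routing is easy to reason about but can become lopsided if names cluster in one range. Hash-based routing avoids that at the cost of making range queries across customers harder.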

Payment gateway overload

Payment processing represents a synchronous external dependency, meaning the checkout flow cannot proceed until the gateway responds. When the provider becomes overwhelmed, the entire checkout experience stalls, creating a cascade of abandoned sessions. Industry analyses indicate that payment failures during high-traffic surges contribute to more than $4.2 billion in annual losses, underscoring how fragile this single dependency can be for revenue-critical flows.

Resilience strategy:

- Gateway failover: configuring the system to switch automatically to a backup payment provider if the primary one times out.

- Asynchronous processing: using message queues to decouple the user’s “place order” click from the actual payment processing, allowing the user to see an “order received” screen immediately while the payment processes in the background.
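
Both strategies above can be combined in a simplified sketch: the order is acknowledged immediately, queued, and charged later with automatic failover between gateways. Everything here is illustrative. The gateway functions, exception type, and order fields are hypothetical, and an in-process `queue.Queue` stands in for a real message broker.

```python
import queue


class GatewayTimeout(Exception):
    """Raised by a (hypothetical) gateway client when the provider does not respond."""


def charge_with_failover(order, gateways):
    """Try each gateway in order and return the name of the one that succeeded."""
    for name, charge in gateways:
        try:
            charge(order)
            return name
        except GatewayTimeout:
            continue  # fall through to the next provider
    raise RuntimeError("all payment gateways failed")


payment_queue = queue.Queue()


def place_order(order):
    """Acknowledge the order right away; payment is processed in the background."""
    payment_queue.put(order)
    return {"order_id": order["id"], "status": "order received"}


def process_pending(gateways):
    """Worker step: drain the queue and charge each order."""
    results = {}
    while not payment_queue.empty():
        order = payment_queue.get()
        results[order["id"]] = charge_with_failover(order, gateways)
    return results


def primary(order):
    raise GatewayTimeout("primary provider timed out")  # simulated overload


def backup(order):
    return None  # simulated successful charge


ack = place_order({"id": "A-1001", "amount": 59.90})
results = process_pending([("primary", primary), ("backup", backup)])
print(ack, results)
```

A real implementation would also need to handle the case where the background payment ultimately fails, for example by notifying the customer and releasing reserved stock.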

How scalability and performance impact revenue

The correlation between technical performance and financial success is one of the most well-documented relationships in the digital economy. It is not merely about user convenience; it is about revenue preservation and acquisition efficiency.

Conversion rate optimization through speed

Speed induces a psychological state of confidence and flow in the user. When a site responds instantly, the user perceives the brand as reliable and professional. Conversely, lag induces anxiety and frustration, prompting users to abandon the funnel. The data is clear: delays kill conversion.

| Metric | Improvement scenario | Revenue impact |
|---|---|---|
| Mobile load time | 0.1s improvement | +8.4% retail conversions |
| Average order value | 0.1s improvement | +9.2% increase in AOV |
| Bounce rate | Load time increases 1s to 3s | +32% increase in bounce rate |
| Customer loyalty | Poor experience | 32% of users abandon brand forever |

High cost of downtime

Downtime is the most extreme form of poor performance. The cost is calculated not just in lost sales during the outage, but in the recovery costs and long-term churn. For a retailer generating $50 million annually, a single hour of downtime during a peak event like Black Friday (where traffic can be 8x normal levels) can cost upwards of $187,000 when factoring in lost revenue, recovery engineering costs, and customer lifetime value loss. The Baymard Institute notes that site crashes and errors account for approximately 15% of all cart abandonments, a statistic that represents billions in uncaptured revenue globally.
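
The lost-sales part of such an estimate can be approximated with simple arithmetic. The sketch below assumes revenue is spread evenly across the year and grows in proportion to the 8x traffic multiplier, both of which are simplifications. Recovery costs and lifetime-value loss are not modeled, and the function name is illustrative.

```python
HOURS_PER_YEAR = 365 * 24  # 8,760 hours, ignoring leap years


def peak_hour_lost_revenue(annual_revenue: float, peak_multiplier: float) -> float:
    """Estimate sales lost during one hour of downtime at peak traffic."""
    average_hourly_revenue = annual_revenue / HOURS_PER_YEAR
    return average_hourly_revenue * peak_multiplier


lost = peak_hour_lost_revenue(50_000_000, 8)
print(f"Estimated lost sales for one peak hour: ${lost:,.0f}")
```

Under these assumptions the lost-sales component comes to roughly $45,700. The rest of the quoted figure would have to come from recovery and lifetime-value costs, which this sketch does not attempt to estimate.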

Architecting for scale: Industry best practices

Achieving top-tier scalability requires adopting modern architectural patterns that prioritize flexibility, decoupling, and cloud-native principles.

Headless commerce

Headless commerce represents a paradigm shift in which the frontend presentation layer (the “head”) is decoupled from the backend commerce engine (the “body”). This architectural separation enables far greater flexibility, faster iteration cycles, and independent scaling of both layers.

In a headless setup, the backend exposes commerce capabilities through APIs, while the frontend is built using modern frameworks such as React or Vue.js. This offers several benefits:

- Performance gains. Companies adopting headless commerce report a 42% increase in conversion rates due to improved user experience and speed.

- Speed to market. By allowing frontend teams to deploy independently of backend release cycles, headless architectures can reduce time-to-market for new features by as much as 50%.

- Omnichannel readiness. Because all channels consume the same API layer, a single backend can support diverse touchpoints without duplicating business logic or infrastructure.

The adoption of this architectural approach is accelerating rapidly, with 73% of businesses currently using it. The global market for headless architecture is projected to grow at a 30.1% CAGR, surpassing $13 billion by 2028.

Serverless computing

Serverless computing (e.g., AWS Lambda, Google Cloud Functions) allows developers to execute code without provisioning, configuring, or maintaining servers. The cloud provider manages all underlying infrastructure and scaling, with costs accruing only for actual compute time. This model aligns naturally with the bursty, event-driven patterns of e-commerce workloads.

Examples:

- Image processing. Edmunds leveraged serverless computing to streamline image handling, reducing infrastructure costs by $100,000 in the first year and cutting processing time from months to days. Here is how it works: when images are uploaded to an S3 bucket, a Lambda function automatically resizes them. This system scales to zero when idle and scales out on demand during bulk uploads.

- Dynamic workloads. Joot used serverless architecture to save 70% in server costs by auto-scaling their media processing infrastructure based on demand rather than provisioning for peak load.

- Financial validation. FINRA used AWS Lambda to perform half a trillion data validations daily, reducing costs by 50% compared to traditional server-based validation.

Progressive web apps (PWA)

Progressive web apps (PWAs) bridge the gap between mobile websites and native apps, utilizing modern web capabilities to deliver app-like experiences. They leverage Service Workers to cache assets and API responses, allowing for instant loading even on poor networks.

Examples:

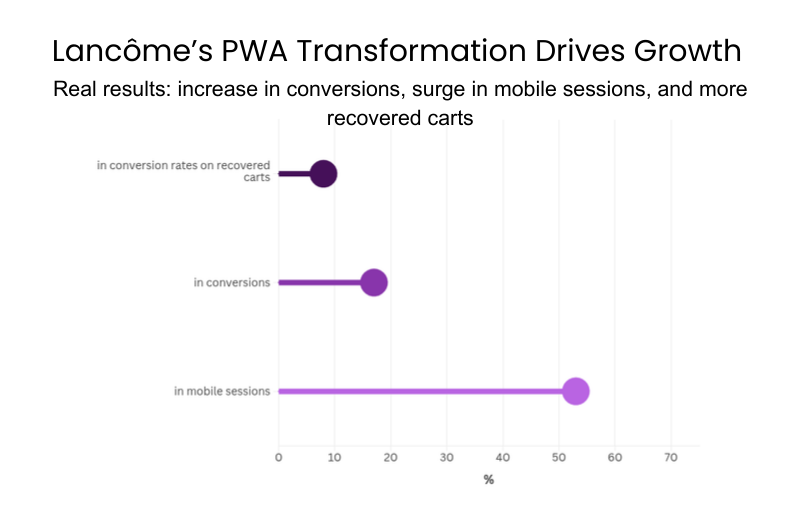

- Lancôme. By rebuilding their mobile site as a PWA, Lancôme saw a 17% increase in conversions, a 53% increase in mobile sessions, and an 8% increase in conversion rates on recovered carts via push notifications.

- AliExpress. After implementing a PWA, AliExpress reported a 104% increase in conversion rates for new users across all browsers.

Supply chain management integration

In modern e-commerce, inventory is a dynamic data point continuously shared across the storefront, warehouse management systems, and logistics providers. A common challenge is “ghost inventory,” where products are sold online despite being out of stock due to delays or inconsistencies in data synchronization. Such discrepancies are a significant driver of customer frustration and churn, highlighting the critical need for real-time, integrated supply chain systems built on two key principles:

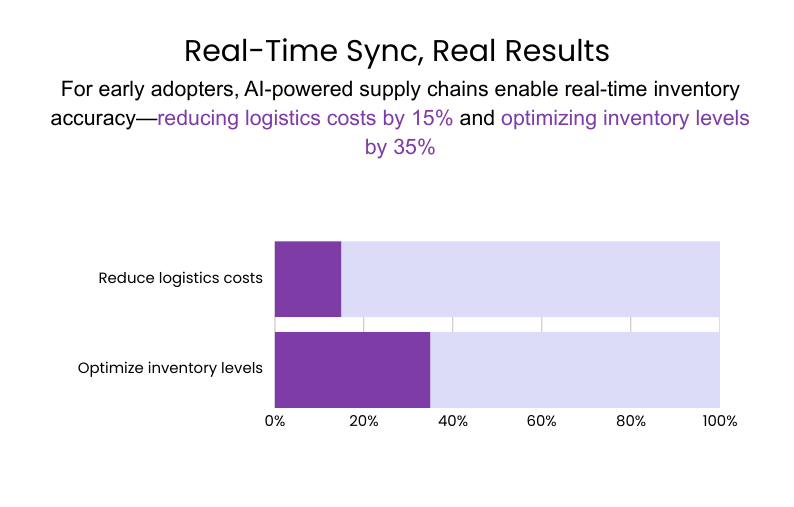

- Real-time synchronization. Integrating real-time inventory databases is essential for accuracy. According to McKinsey, early adopters of AI-enabled supply chain management can reduce logistics costs by 15% and optimize inventory levels by 35%.

- Event-driven updates. Rather than relying on slow, periodic polling of the warehouse management system, modern architectures should employ event-driven frameworks (such as Kafka) to push inventory updates instantly to the e-commerce database, ensuring the storefront always reflects accurate stock levels.
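
The push-based model described above can be illustrated with a tiny in-memory publish/subscribe bus. This is a conceptual sketch only: it does not use the Kafka client API, and the topic name, event fields, and class are hypothetical.

```python
from collections import defaultdict


class EventBus:
    """Minimal in-process stand-in for a message broker."""

    def __init__(self):
        self._subscribers = defaultdict(list)

    def subscribe(self, topic, handler):
        self._subscribers[topic].append(handler)

    def publish(self, topic, event):
        # Deliver the event to every handler registered for the topic.
        for handler in self._subscribers[topic]:
            handler(event)


storefront_stock = {"sku-123": 5}  # what the storefront currently shows


def apply_stock_change(event):
    """Storefront-side handler: update stock as soon as the warehouse reports it."""
    storefront_stock[event["sku"]] = event["available"]


bus = EventBus()
bus.subscribe("inventory.updated", apply_stock_change)

# The warehouse system publishes a change the moment the last unit is picked.
bus.publish("inventory.updated", {"sku": "sku-123", "available": 0})
print(storefront_stock)
```

Because the storefront reacts to each event instead of waiting for the next polling cycle, the window in which "ghost inventory" can be sold shrinks to the event delivery time.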

Performance optimization techniques

Optimizing performance is critical for e-commerce platforms, as faster pages directly impact conversion, user satisfaction, and revenue. Effective optimization spans multiple layers – from frontend assets to backend databases – ensuring that every interaction is as responsive as possible.

Image optimization

Images often constitute the largest portion of a page’s payload, making them a prime target for optimization.

- Next-gen formats. Serving images in modern formats like WebP or AVIF instead of JPEG can reduce file sizes by 25-50% without perceptible quality loss.

- Adaptive serving. Leveraging the `<picture>` element allows platforms to serve smaller images to mobile devices and higher-resolution versions to desktop or retina displays, preventing users from downloading unnecessary bytes.

Code splitting

Modern JavaScript applications can become bloated, delaying the time to interactive (TTI).

- Route-based splitting. Only load the JavaScript needed for the current page. For example, the product page code is not loaded when the user is on the homepage.

- Tree shaking. Remove unused code from the production build. This can reduce TTI by over 60%.

Database indexing

A database without proper indexing is akin to a library without a catalog; every query requires scanning all records, slowing response times.

- Indexing strategy. Create indexes on frequently queried columns, such as product_name or category_id, to reduce query times from seconds to milliseconds.

- Query analysis. Tools like EXPLAIN ANALYZE in PostgreSQL help identify slow queries, allowing developers to optimize them proactively before they become performance bottlenecks.
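
The effect of an index on a query plan can be demonstrated with a small, self-contained example. The sketch below uses SQLite from the Python standard library and its `EXPLAIN QUERY PLAN` statement as a stand-in for PostgreSQL's `EXPLAIN ANALYZE`. The table layout, index name, and data are made up for illustration.

```python
import sqlite3

conn = sqlite3.connect(":memory:")
conn.execute(
    "CREATE TABLE products (id INTEGER PRIMARY KEY, product_name TEXT, category_id INTEGER)"
)
conn.executemany(
    "INSERT INTO products (product_name, category_id) VALUES (?, ?)",
    [(f"product-{i}", i % 50) for i in range(5000)],
)

QUERY = "SELECT product_name FROM products WHERE category_id = ?"


def query_plan(connection, sql, params):
    """Return SQLite's textual query plan for the given statement."""
    rows = connection.execute("EXPLAIN QUERY PLAN " + sql, params).fetchall()
    return " | ".join(row[-1] for row in rows)  # last column holds the plan detail


plan_before = query_plan(conn, QUERY, (7,))  # full table scan expected
conn.execute("CREATE INDEX idx_products_category ON products (category_id)")
plan_after = query_plan(conn, QUERY, (7,))   # index lookup expected

print("before:", plan_before)
print("after: ", plan_after)
```

Before the index exists, the plan reports a scan of the whole table. Afterwards it reports a search using `idx_products_category`. The exact wording of the plan varies between SQLite versions.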

Tracking metrics that matter: Essential KPIs for scalable growth

Scaling effectively requires measuring what truly influences performance, customer experience, and business outcomes. A robust KPI framework should capture both technical efficiency and commercial impact, ensuring that growth does not come at the cost of stability or profitability. The following metrics provide a comprehensive, 360-degree view of system health.

Technical performance metrics

Technical KPIs reveal how well the platform responds under real-world conditions. They help identify bottlenecks, monitor stability, and ensure that infrastructure, code, and data systems scale seamlessly as traffic grows.

| Metric | Target | Industry average | Revenue impact |
|---|---|---|---|
| Page load time | < 2.5s | 4.2s | 7% conversion loss per second delay |
| Time to interactive | < 3.5s | 5.8s | 11% bounce rate increase per second |
| Server response time | < 200ms | 450ms | 1% revenue loss per 100ms latency |
| API latency | < 500ms | 800ms | 0.5% cart abandonment per 100ms |
| Error rate | < 0.1% | 2.3% | 3x impact on customer retention |

Business performance metrics

Business KPIs connect platform performance to commercial outcomes. They highlight how speed, reliability, and user experience influence conversions, loyalty, and overall revenue.

By tracking these indicators, teams can ensure that technical improvements translate into measurable business value:

- Cart abandonment rate. Indicates the percentage of users who add items but do not purchase. The global average is approximately 70.19%. Reducing this by even a fraction through performance improvements yields significant revenue.

- Repeat purchase rate. Reflects how reliability and experience influence customer loyalty. Strongly affected by inventory accuracy and fulfillment speed.

- System uptime. The percentage of time the site is available. A typical target is 99.95%, which translates to roughly 4.38 hours of allowable downtime per year.

- Infrastructure cost per transaction. A vital efficiency metric. As the business grows, this cost should decrease due to economies of scale and optimization.
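
The uptime figure in the list above follows directly from the number of hours in a year. A short sketch of the conversion, assuming a 365-day year (the function name is illustrative):

```python
HOURS_PER_YEAR = 365 * 24  # 8,760 hours, ignoring leap years


def allowed_downtime_hours(uptime_percent: float) -> float:
    """Convert an uptime target in percent into allowable downtime hours per year."""
    return HOURS_PER_YEAR * (1 - uptime_percent / 100)


for target in (99.9, 99.95, 99.99):
    print(f"{target}% uptime allows {allowed_downtime_hours(target):.2f} hours of downtime per year")
```

For the 99.95% target this gives about 4.38 hours per year, matching the figure quoted above.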

Skeptic in the room: Separating scalability myths from reality

In an era when vendors promote “hyperscale everything,” it’s easy for teams to feel pressured to adopt architectures designed for unicorns rather than real-world businesses. A pragmatic technology leader must deliberately separate engineering ambition from commercial necessity. Healthy skepticism isn’t resistance to progress – it’s a mechanism for avoiding waste, over-engineering, and complexity traps.

Scalability is expensive and overhyped

Argument: Cloud-native systems, Kubernetes clusters, and microservices come with heavy operational overhead. Cloud bills climb unpredictably, and maintaining distributed systems requires costly, specialized engineering talent.

Reality: This is a valid concern. Flexera’s 2024 State of the Cloud Report indicates that 82% of enterprises exceed their cloud budgets, underscoring how easy it is for spending to drift without rigorous cost governance.

Balance: Scalability must be matched to revenue. “FinOps” (Financial Operations) practices are essential to monitor cloud waste. A business with 1,000 daily visitors does not require the same architecture as Netflix.

Not every business needs a full cloud-native architecture

Argument: A well-optimized “modular monolith” can handle substantial traffic. Shopify’s core platform is famously monolithic at its foundation. Distributed systems introduce network latency, cross-service debugging challenges, and operational overhead that simply do not exist in a monolith.

Reality: Premature decomposition often reduces development velocity due to increased coordination, duplicated service logic, and the burden of observability tooling. Many companies discover that the monolith was not the bottleneck – the database, caching layer, or frontend bundle was. Without clear evidence of architectural strain, microservices become an architectural tax rather than a performance enabler.

Balance: Begin with a modular, cleanly structured monolith. Extract only the services that demonstrably strain the system, such as search, checkout, or inventory, following a deliberate, data-driven Strangler-Fig pattern.
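
At its core, the Strangler-Fig approach comes down to a routing layer that sends selected paths to extracted services and everything else to the monolith. The sketch below is a simplified illustration. The path prefixes and service names are hypothetical, and in practice this logic usually lives in a reverse proxy or API gateway rather than in application code.

```python
# Paths that have already been extracted from the monolith (hypothetical).
EXTRACTED_PREFIXES = {"/search": "search-service"}


def route(path: str, extracted=EXTRACTED_PREFIXES) -> str:
    """Return the backend that should handle the request path."""
    for prefix, service in extracted.items():
        if path == prefix or path.startswith(prefix + "/"):
            return service
    return "monolith"  # default: not yet extracted


for path in ("/search/shoes", "/checkout", "/cart/items"):
    print(path, "->", route(path))
```

Extracting another capability then becomes a matter of adding one entry to the routing table once the new service has proven itself, which keeps each migration step small and reversible.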

Autoscaling solves all performance problems

Argument: Autoscaling is often portrayed as a magic switch that instantly resolves traffic spikes. Many teams believe that as soon as the load increases, the cloud will automatically provision more compute and everything will continue running smoothly.

Reality: Autoscaling reacts after pressure is detected, not before. If databases, third-party APIs, or payment gateways reach saturation, autoscaling application servers won’t help. In fact, scaling stateless services without addressing underlying bottlenecks can overwhelm downstream systems even faster. Additionally, autoscaling delays, cold starts, and misconfigured scaling thresholds frequently cause performance degradation during peak load.

Balance: Autoscaling is a tool – not a strategy. Real scalability comes from understanding upstream and downstream dependencies, load-testing critical paths, implementing backpressure, and designing systems that degrade gracefully.
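
Backpressure, mentioned above, can be illustrated with a bounded queue that rejects new work once it is full instead of letting requests pile up. This is a minimal sketch with a hypothetical class name and limit. A real system would pair it with a meaningful response to the caller, such as a retry-after hint or a waiting-room page.

```python
import queue


class BoundedWorkQueue:
    """Accept work only while there is room, and shed load once the limit is hit."""

    def __init__(self, max_pending: int):
        self._queue = queue.Queue(maxsize=max_pending)

    def submit(self, request) -> bool:
        try:
            self._queue.put_nowait(request)
            return True   # accepted for processing
        except queue.Full:
            return False  # rejected: caller should back off or degrade

    def pending(self) -> int:
        return self._queue.qsize()


work = BoundedWorkQueue(max_pending=2)
accepted = [work.submit(f"req-{i}") for i in range(4)]
print(accepted, work.pending())
```

Rejecting the third and fourth requests early keeps downstream systems such as the database within their limits, which is often preferable to accepting everything and timing out later.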

When to work with an external partner

Organizations typically turn to an external engineering partner when scalability efforts exceed internal capacity or when the cost of failure is simply too high. These scenarios require specialized skills, mature engineering processes, and operational reliability that many in-house teams cannot sustain alone.

- Handling complex infrastructure that stretches internal expertise

When the architecture involves distributed systems, multi-region deployments, event-driven pipelines, or hybrid cloud setups, an external partner can provide architectural guidance and hands-on implementation to avoid costly design and configuration errors.

- Maintaining 24/7 monitoring and incident response

High-traffic e-commerce platforms demand continuous observability, proactive alerting, application performance management, and immediate incident remediation. External teams can provide dedicated SRE/DevOps coverage to keep systems stable around the clock.

- Managing migrations to cloud, headless, or microservices architectures

Replatforming is inherently high-risk. A seasoned partner brings proven migration frameworks, governance, and performance-first engineering practices to ensure smooth transitions with minimal disruption.

- Scaling infrastructure for global markets

Expanding to new regions requires geo-distributed deployments, CDN and edge optimizations, latency reduction strategies, and compliance considerations. External expertise accelerates planning and implementation for global readiness.

- Conducting deep performance engineering and load testing

Many scalability and performance outcomes depend on early e-commerce software development decisions, including platform choice and system design. Simulating peak events, finding bottlenecks, and optimizing for reliability under extreme load often require specialized tools and skills. External performance engineers can benchmark, test, and validate the platform’s ability to scale before traffic hits.

Neontri – your strategic scalability partner

With more than a decade of experience delivering high-availability platforms, mission-critical fintech systems, and performance-sensitive digital products, Neontri helps organizations build architectures that are not only scalable but predictably stable, cost-efficient, and future-ready. Our teams combine hands-on engineering excellence with a business-first approach, ensuring that every technical decision aligns with measurable ROI.

From designing distributed systems and implementing microservices to orchestrating cloud migrations and headless commerce platforms, we help organizations achieve rapid time-to-market, reduce operational risk, and maximize ROI from technology investments.

Conclusion

Scalability and performance are foundational components of any successful e-commerce digital transformation initiative. The path to a scalable e-commerce platform requires deliberate architectural choices – from implementing proper load balancing and content delivery networks to optimizing database queries and checkout processes. Modern e-commerce businesses must balance the complexity of cloud infrastructure with pragmatic ROI considerations, ensuring that technology investments directly support business growth.

Success in e-commerce performance optimization isn’t about implementing every cutting-edge solution, but rather choosing the right scalable solutions for your specific growth trajectory. Whether utilizing serverless functions for image processing or deploying a headless architecture for omnichannel reach, the principles remain consistent: monitor key performance metrics, optimize customer experience, and scale infrastructure to meet customer demand without compromising performance.

Contact us today to assess your platform’s scalability, uncover performance bottlenecks, and implement solutions that drive measurable growth and long-term ROI.

FAQ

How much does it cost to scale e-commerce architecture?

The cost varies significantly based on current size and growth targets.

- Small to medium ($10M-$50M revenue): Infrastructure costs may range from $5K-$20K/month, with implementation costs of $100K-$300K.

- Medium to enterprise ($50M-$200M revenue): Infrastructure often jumps to $20K-$80K/month, with implementation costing $500K+.

Do we need microservices to scale effectively?

Not necessarily. Microservices are best suited for organizations with large teams (50+ developers) and traffic exceeding 1 million daily users. For smaller operations, a well-tuned modular monolith is often more efficient and cost-effective.

How to measure scalability ROI?

Use the formula: Scalability ROI = (Revenue Gains + Cost Savings – Investment) / Investment * 100. Revenue gains come from improved conversion rates and reduced downtime. Cost savings come from optimized infrastructure (e.g., shutting down unused servers).
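
As a quick worked example of the formula, the sketch below plugs in made-up figures. The amounts are purely illustrative and do not come from any benchmark.

```python
def scalability_roi(revenue_gains: float, cost_savings: float, investment: float) -> float:
    """Return ROI in percent: (gains + savings - investment) / investment * 100."""
    if investment <= 0:
        raise ValueError("investment must be positive")
    return (revenue_gains + cost_savings - investment) / investment * 100


# Hypothetical year-one figures: $400K extra revenue, $100K infrastructure
# savings, $250K invested in the scalability program.
roi = scalability_roi(400_000, 100_000, 250_000)
print(f"Scalability ROI: {roi:.0f}%")
```

With these inputs the combined benefit is twice the investment, so the formula yields an ROI of 100%.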